Suhaib Abdurahman

About Me

I am a social psychologist with a strong engineering background. I originally worked on applying machine learning to control systems and process optimization (BS/MS Process Engineering, TU Berlin), but became increasingly interested in using these methods to study human behavior (BS, FU Berlin; MA/PhD, University of Southern California).

I now work as a researcher and Applied Scientist at the intersection of Social Psychology and Machine Learning. My work focuses on applying psychological theory to build more capable AI systems and on using computational methods to uncover the mechanisms underlying human behavior.

Research Interests and Methods

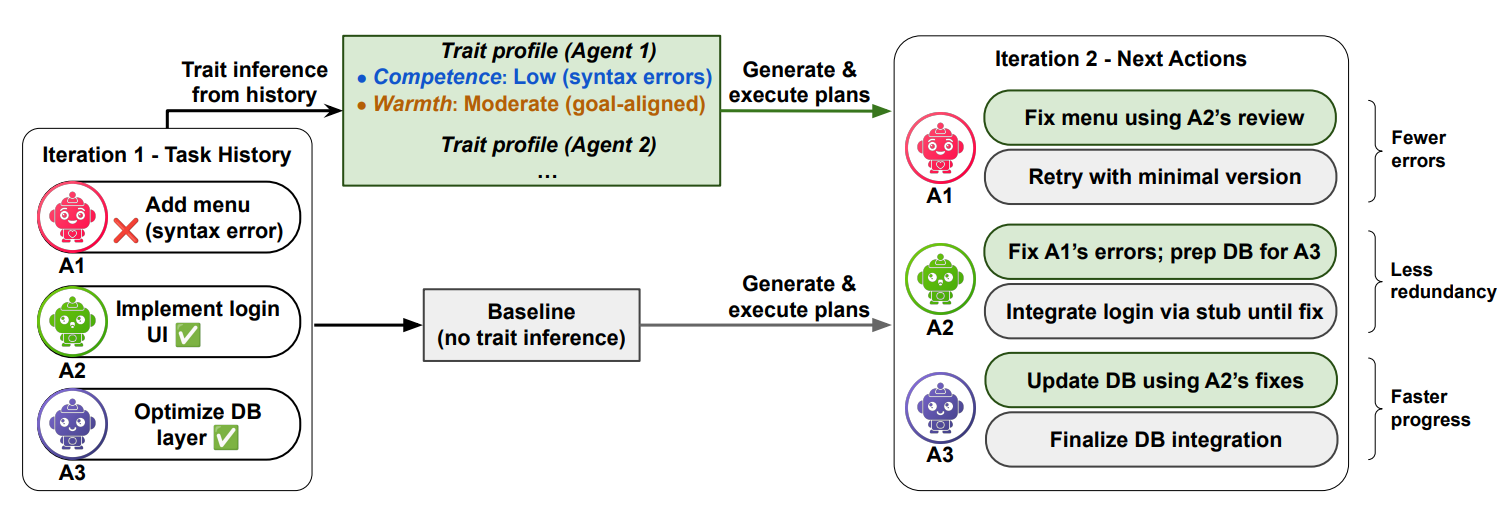

- Socio-Cognitive & Agentic AI: Building more adaptive and robust AI agents by drawing on psychology and cognitive science to improve how agents coordinate and collaborate (e.g., task allocation, instruction-following, adversarial detection), boosting performance and reliability across both social and non-social settings.

- Theory-Driven Evaluation: Developing benchmarks and evaluation frameworks grounded in psychological and cognitive theory to move beyond surface-level metrics and assess the latent cognitive and social capabilities of AI systems.

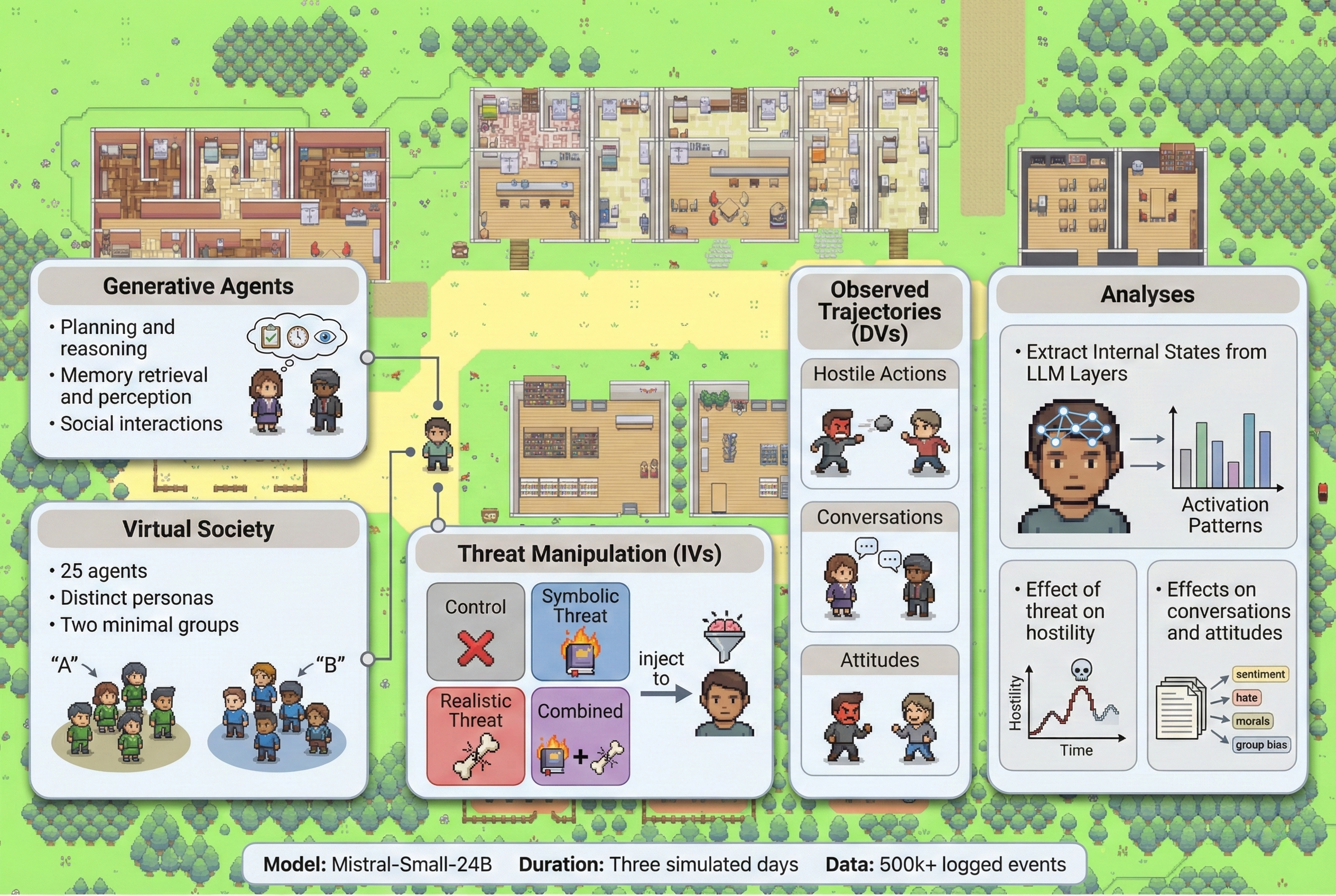

- Social Simulations: Using Agent-Based Modeling (ABM) and LLM-based simulations to study emergent dynamics in human and AI systems alike; for example, identifying the conditions under which humans and/or agents cooperate, compete, or sabotage.

- Computational Social Science: Investigating how human values, traits, and perceptions drive complex social behaviors like intergroup conflict, misinformation sharing, and digital well-being. I combine behavioral experiments with NLP and representation learning to extract psychological signals and build predictive models of human behavior.

Selected Publications [Google Scholar]

-

Proceedings of the 64th Annual Meeting of the Association for Computational Linguistics (ACL 2026), Main Track.

Proceedings of the 64th Annual Meeting of the Association for Computational Linguistics (ACL 2026), Main Track. -

-

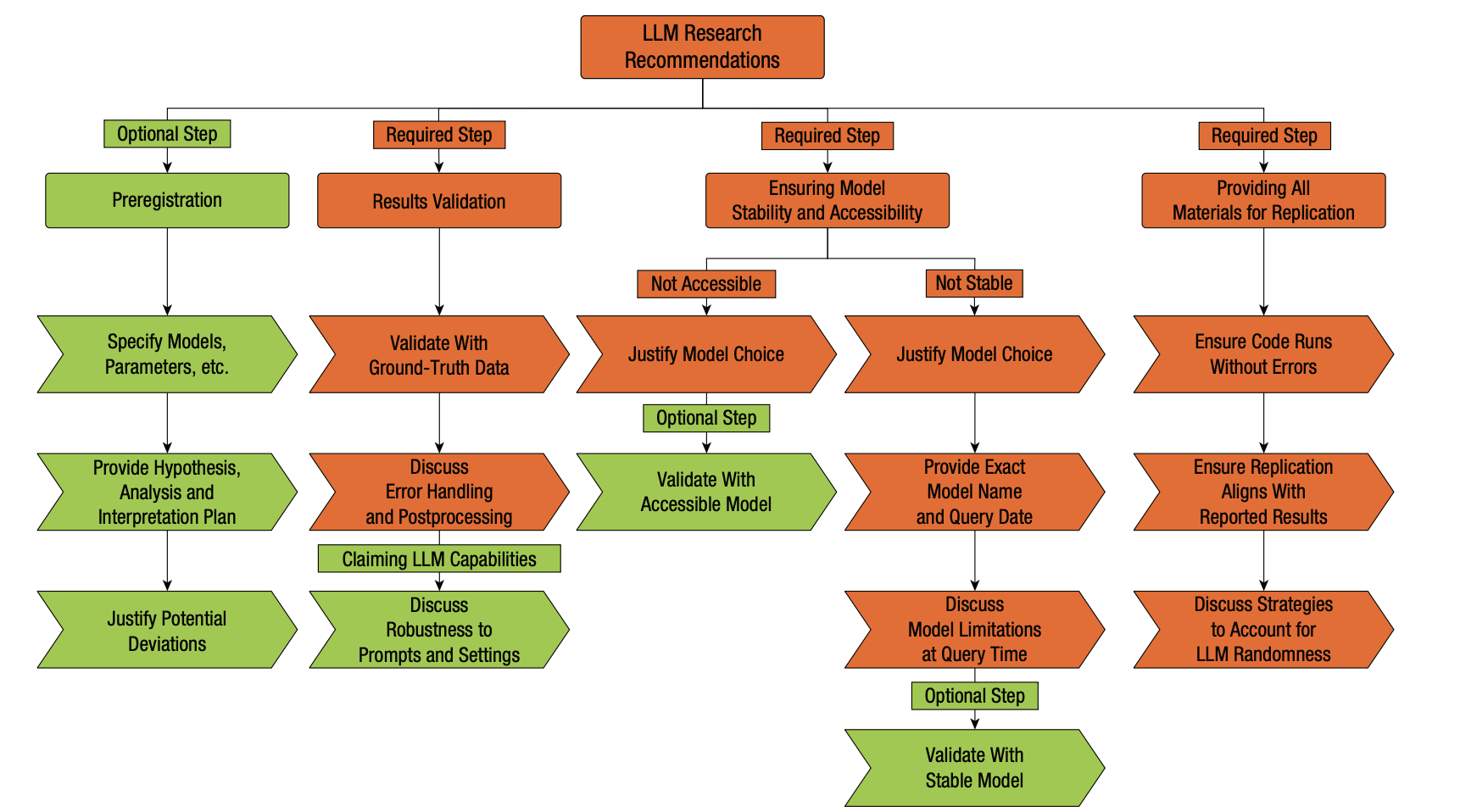

Advances in Methods and Practices in Psychological Science (AMPPS), 2025.

Advances in Methods and Practices in Psychological Science (AMPPS), 2025. -

PNAS Nexus (PNAS NEXUS), 2024.

PNAS Nexus (PNAS NEXUS), 2024. -

Journal of Personality and Social Psychology (JPSP), 2023.

Journal of Personality and Social Psychology (JPSP), 2023. -

Findings of the Association for Computational Linguistics (EMNLP 2020).

Findings of the Association for Computational Linguistics (EMNLP 2020).

Selected Conference Presentations

- Abdurahman, S., Ishii, E., Margatina, K., Bhargavi, D., Sunkara, M., Zhang, Y. (2026). Explicit Trait Inference for Multi-Agent Coordination. Association for Computational Linguistics (ACL) 2026 Main Track

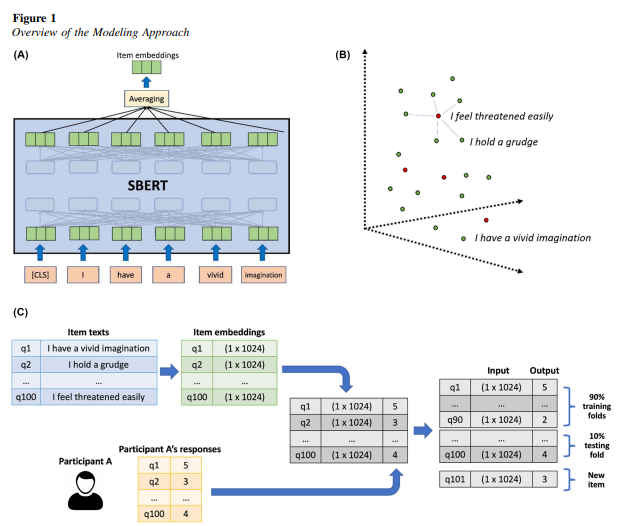

- Abdurahman, S., Vu, H., Zou, W., Ungar, L., Bhatia, S. (2024). A Deep Language Approach to Personality Assessment: Generalizing Across Items and Expanding the Reach of Survey-Based Research. SPSP

- Abdurahman, S., Vu, H. (2023). Investigating Social Inferences in Large Language Models: Advancements and Biases. Psychology of Technology

Ongoing Academic Projects

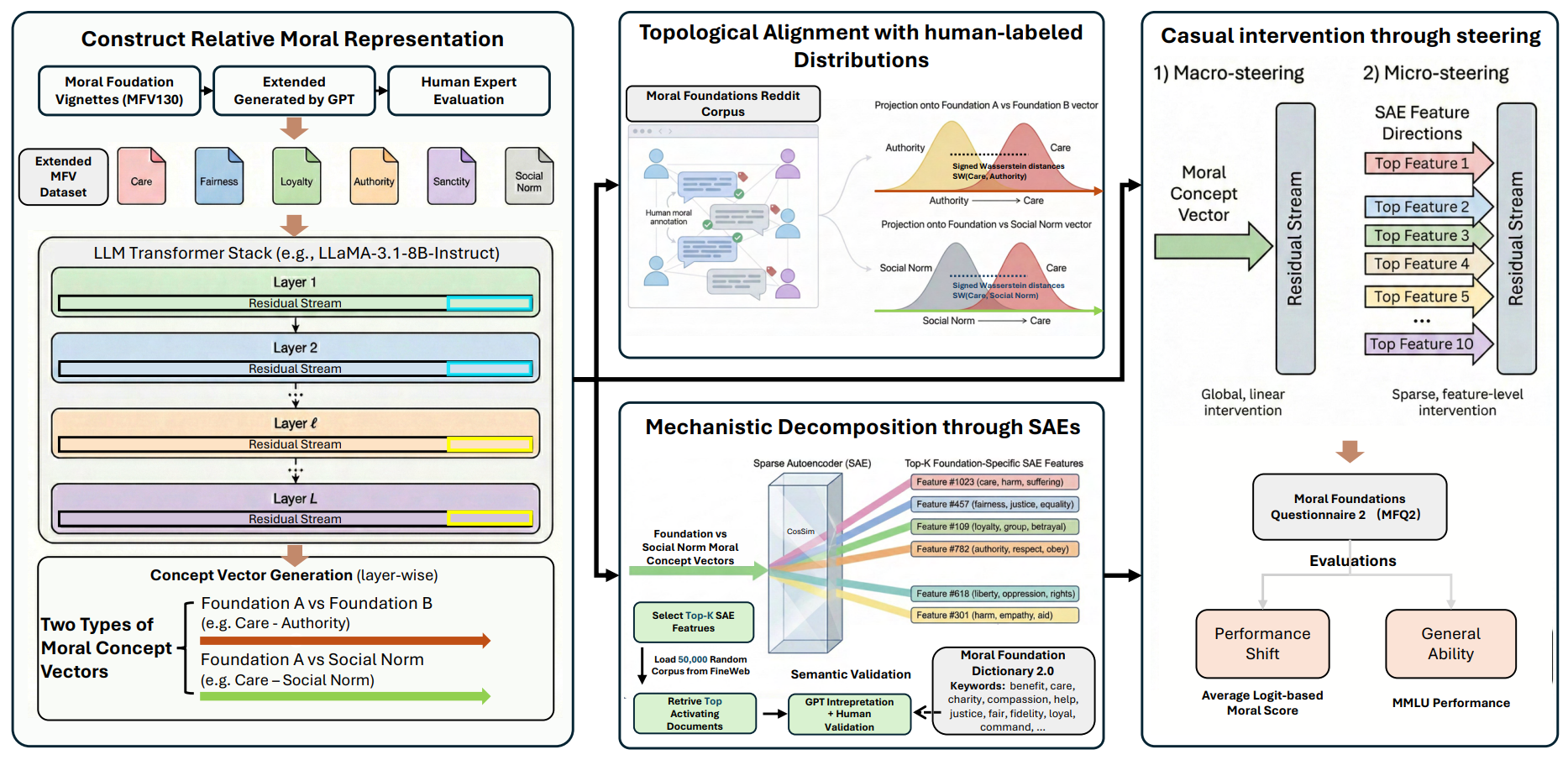

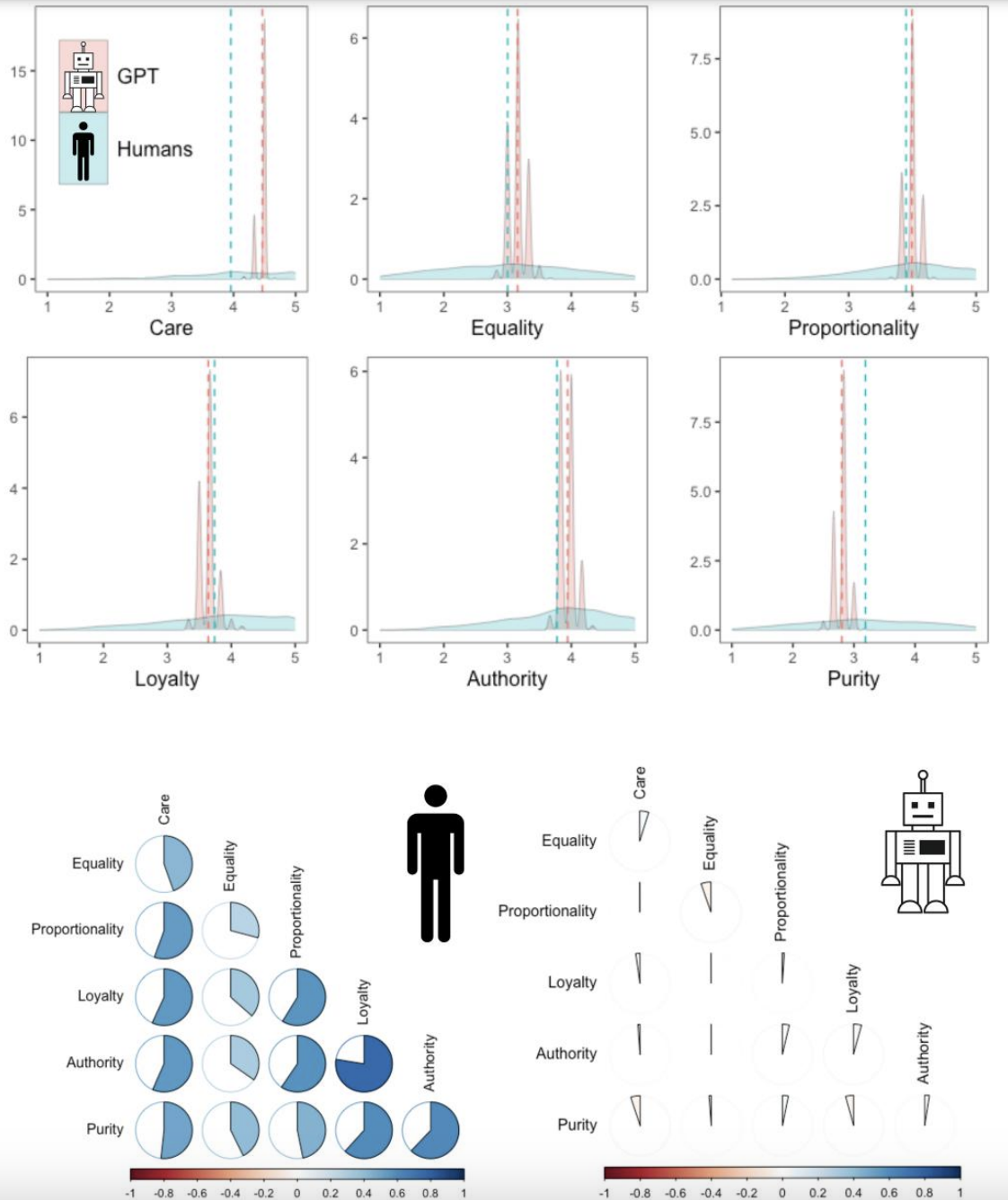

- Modeling Moral Values and Decision-Making: Investigating how context, categorization, concept representations, and reward-based learning shape moral judgments and behavior.

Side Projects

-

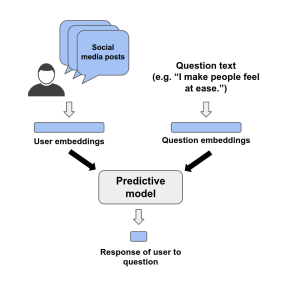

Social Inferences in Language Models: Comparing small encoder-based models (e.g., BERT) and large generative models (e.g., GPT-3.5, GPT-4) in their ability to make social inferences from psychological questionnaires. Generative models were more accurate but also more biased and less transparent, highlighting trade-offs between accuracy, bias, and interpretability.

- Explaining Explainability: Advocating for interpretable machine learning in the behavioral sciences by clarifying misconceptions about “black box” models. We demonstrate that interpretability can coexist with predictive accuracy and that post hoc explanations can advance both prediction and understanding of complex behavior.

Services

Conference Review

Journal Review

- Nature

- Computers in Human Behavior

- Advances in Methods and Practices in Psychological Science

- Journal of Personality and Social Psychology

Contact

Address: 3620 McClintock Ave, Los Angeles, CA 90089

Office Location: SGM 604

Email: sabdurah@usc.edu